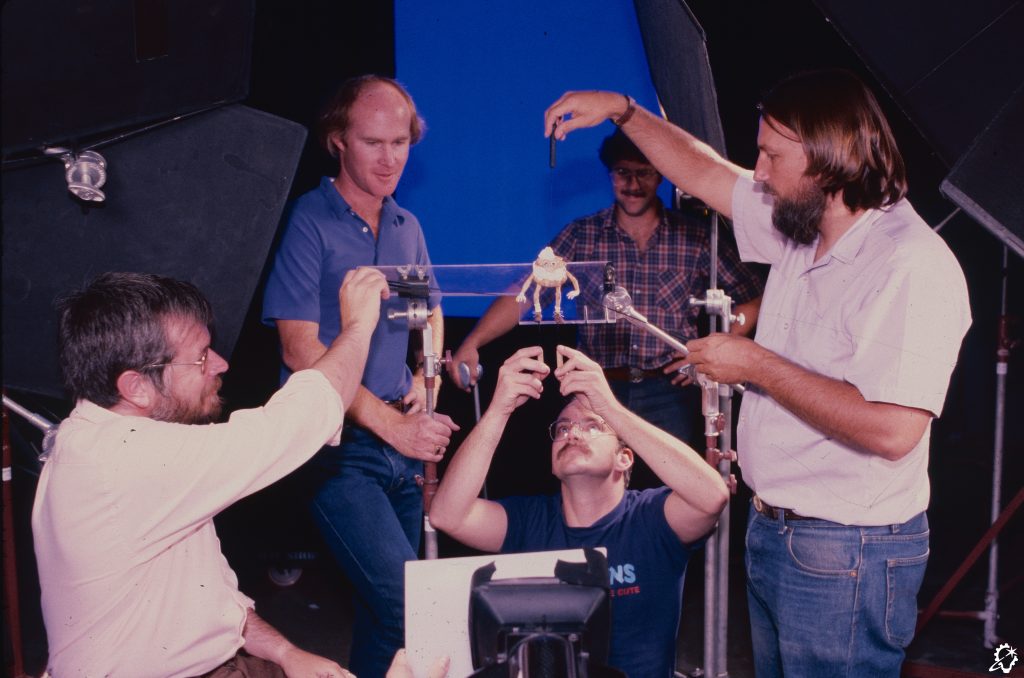

Visual effects supervisor Bill Georgiou and associate visual effects supervisors Stephen Tong, George Kuruvilla, and Arnab Sanyal discuss ILM’s visual effects contributions to the final season of Netflix’s hit series.

By Jay Stobie

The struggle against Vecna (Jamie Campbell Bower) rises to an epic crescendo in the fifth and final season of Netflix’s Stranger Things (2016-2025), as Jane “Eleven” Hopper (Millie Bobby Brown) and her friends seek out their foe in a bid to protect Hawkins from impending doom.

ILM visual effects supervisor Bill Georgiou and ILM associate visual effects supervisors Stephen Tong, George Kuruvilla, and Arnab Sanyal spoke with ILM.com to highlight Industrial Light & Magic’s visual effects contributions to all eight episodes of season five, which included crafting the gruesome membrane wall surrounding the Upside Down, creating the Demogorgon attack on the MAC-Z base, melting a room around Jonathan Byers (Charlie Heaton) and Nancy Wheeler (Natalia Dyer), and much more.

Accessing the Upside Down

“I was the ILM visual effects supervisor for Stranger Things, and I oversaw our global team,” Bill Georgiou shares with ILM.com. “Each ILM studio – Sydney, Vancouver, and Mumbai – was run by very talented associate visual effects supervisors – Stephen, George, and Arnab – who I worked closely with. I also worked directly with the clients, in this case, the Duffer Brothers and client-side visual effects supervisor Betsy Paterson, and I even spent almost two months on the set with them. ILM was initially awarded around 1,200 shots overall, spread across every episode.”

The scope of ILM’s shots was vast, encompassing a range of visual effects disciplines. Whether ILM was adding major environmental extensions to bolster the Hawkins set and provide a bird’s-eye view of the town, inserting spores throughout the Upside Down’s air, or working with assets like digital doubles, Demogorgons, and a massive vine creature, every department was involved. Given the breadth of ILM’s duties, the schedule and availability of the artists at ILM’s Sydney, Vancouver, and Mumbai studios determined who received which tasks. “I was the associate visual effects supervisor in Sydney,” Stephen Tong relays. “We did about 600 shots in Sydney, and I had the pleasure to be with Bill in the same office, which made things a lot easier.”

“ILM’s Vancouver studio did about 350 finals in total,” associate visual effects supervisor George Kuruvilla notes about his site’s responsibilities. “While Bill sleeps, we get everything else ready on this side of the world [laughs].” Associate visual effects supervisor Arnab Sanyal, whose site is ILM’s newest addition, reports, “I managed the team here in Mumbai, and we got over 250 shots. When ILM’s Mumbai studio began in 2022, many of us came to ILM from other studios. Our team was growing, so the initial projects we did consisted of basic-level work. However, year after year, our projects are getting more and more complex, which shows that the more established ILM sites have trust in us. We’re always doing something interesting, and that’s the best part. As a creative, that is the type of assignment that we look for.”

A Memorable Membrane Wall

The seemingly infinite membrane wall surrounding the Upside Down became an asset that embodied ILM’s global approach to the project. While production had built a portion of the wall on set for the actors, ILM needed to extend the otherworldly barrier into the vast unknown and modify it for multiple scenes. Although the digital asset was built at ILM’s Sydney studio, the other two sites needed to alter it for their own shots. For example, while Vancouver focused on Steve Harrington’s (Joe Keery) car striking the wall, Mumbai handled the sequence where Eleven and Jim Hopper (David Harbour) fight the army at its foundation.

The Sydney studio tackled the wide establishing shots of the wall, as Tong explains, “We needed the wall to look good in wide, medium, and close-up shots. When we do big environments, you normally think of hard surfaces, like mountains and rocks, but this was more of an organic, fleshy wall. There was liquid running down the wall, and then we needed to tear it and heal it – George’s team had to put a BMW through the wall and do all sorts of creative things.”

Georgiou concurs, recalling, “There was so much look development time put into that wall. We started with a fleshy concept that the client provided. We looked at all kinds of medical reference, and it had to have a very visceral, human feeling to it. We worked on the wall for about six months, if not longer, and based it on human tendons, veins, and pustules. All of the work that we did was grounded in reality in one way, shape, or form. Our references were quite gross [laughs], and our texture and look dev artists had to look at the worst of it all. It was pretty horrific at times. The wall had to have a subsurface component as well because the characters were shining flashlights onto it, and we needed to see light disperse through it.”

“In dailies, when we talked about some of the liquids, we used food as reference because people know the difference in viscosity between honey and blood, for example,” Tong reveals. Turning to the hole that is eventually torn into the wall, Kuruvilla echoes Georgiou’s observations about the grotesque nature of certain references, saying, “The client requested the wall to feel like it had been ripped, so we looked at photos of the inside of a whale. The edges needed to feel like a butcher’s knife had gone through it.”

Mumbai set its sights on another section of the wall, where Eleven and Hopper take refuge behind a billboard before clashing with the army. “The main asset for the membrane wall came from Sydney, but our angle of the wall was slightly different from theirs, so we had to work on the asset for our shots. There is a massive amount of detailing that normal viewers might not notice,” Sanyal opines. One such component even consisted of a soldier urinating on the membrane wall, which meant that, as the ILM team recalls with a laugh, they needed to run effects simulations so that the liquid’s properties lined up precisely with how the filmmakers wanted it to appear on screen.

The Void and a Vine

After Steve Harrington’s BMW collides with the membrane wall, a thrilling sequence sees the car pulled through the opening and out into a nebulous void. “We started with a full CG shot where the camera moves through the hole in the membrane wall, and when it goes onto the other side, you’re in the void and look up to see a different scale of the wall,” Kuruvilla remarks about the Vancouver studio’s approach. “The challenge is the scale because on one side of the wall you see Jonathan and Nancy standing in front of it, and you sense that it is infinite – but you don’t see it go on forever.”

Georgiou agrees, acknowledging, “Scale was so important. The BMW is literally flying through, and the camera’s following it, but then, as the camera turns around, we’re supposed to see the ‘inside out’ version of the wall. The level of detail was quite high, but the detail scale had to be quite small so that it felt enormous and as if our camera had traveled a huge distance away to be able to see the entirety of this wormhole-shaped wall.”

When the time came to work on a vine creature from the Upside Down that has been captured by the military, ILM sought to keep the visual language consistent with the membrane wall. “This massive vine had to have its own character. Vines are particularly tough to do, especially when they have to wrap around an actor. In this case, it had to wrap around Hopper’s neck and lift him up. We spent a lot of time in look development to get the vine to be realistic and have similarities to the wall with pustules and a mucus covering,” Georgiou divulges.

The vine’s interaction with Hopper’s neck was a notable obstacle, as the character’s attire and features acted as hurdles to making that interaction believable. “They filmed the shots using a pool noodle around David Harbour’s heavily bearded face and neck as he was suspended from the ceiling,” Georgiou describes. “To keep the vine alive, we were required to add additional motion and rotation which meant that both his clothing and long hair and beard would need to move and interact with the vine. We meticulously match moved and look-deved the actor with a digi-double and created a groom for his hair. Both the hair and the clothing were then simulated to allow us to get the pressure of the vine’s squeeze and shadows from the various set lights onto David.”

“On top of that, the shots were very difficult to integrate for compositing,” Tong asserts. “The on-set lighting had many spinning lights off-screen. It was a hazy environment, and the atmosphere changes in every frame as the particle light spins. So, when you integrate something with the plates in those lighting conditions, you have to be careful to match those levels and the haziness.”

MAC-Z Mayhem

Another entity essential to the Upside Down was Stranger Things’s legendary Demogorgon design. Wētā FX upgraded the Demogorgon model from previous seasons and passed it to ILM for their own Demogorgon sequences, where the team then modified the creatures for an attack on the MAC-Z military base in the center of Hawkins. “The Demogorgons are iconic to the series, and it was so cool to work on them and their look dev,” emphasizes Georgiou, whose tenure on set was largely devoted to overseeing this climactic battle, which gradually results in the Demogorgons becoming dirtier, bloodier, and even burned. “I will never look at barbecued chicken the same way,” Georgiou jokes about the reference ILM used for the burned Demogorgon.

ILM placed blood maps on the Demogorgons’ arms, legs, and chests to reflect the carnage they wrought, as ILM’s sequence required the team to supply a different type of Demogorgon performance than the show’s other vendors. Whether the Demogorgons were lifting or stomping the soldiers they faced, the shots needed simulations for skin sliding and muscle movement. The effects team added drool and blood, while impact points were created so the animation department could depict dents in the Demogorgons’ skin where bullets struck them.

“Our animation team was incredible, as both Mumbai and Sydney did a fantastic job with getting the physicality and weight for the Demogorgons,” Georgiou proclaims before expressing his joy over seeing ILM animators film themselves lumbering, slashing, and roaring to provide reference, commenting, “For creatures that are eight-plus feet tall and have extremely long arms and claws, with enough strength to jump across entire sets, they worked really well.”

As the fight unfolds at the MAC-Z, Demogorgons also pursue children through an underground tunnel. “Mumbai had those Demogorgon shots,” Sanyal explains. “The Demogorgon’s animation and the way it moves were not easy things to do because it was not human-like, but not truly animal-like either. When Will Byers [Noah Schnapp] uses his telekinetic power, we had to determine how the Demogorgons would react, shake, move, and finally break.” Tong also beams about reviewing the shocking revelation in dailies, reminiscing, “When Will stopped the Demogorgon from killing Mike Wheeler [Finn Wolfhard], I knew this scene was going to make fans jump on the sofa and scream. It’s so iconic and unexpected.”

Demogorgon movements weren’t the only delicate aspect of the tunnel scene spearheaded by the Mumbai studio. “We tackled three or four rifts within the tunnel, with the most intricate details in the closing one,” Sanyal states. “Imagine a spider drawing an insect into its web – that’s how a child was being pulled into the rift, and the entire web reacted to their movement. Our FX team handled this unique and challenging work, resulting in a truly amazing final output!”

An On-Set One-Shot

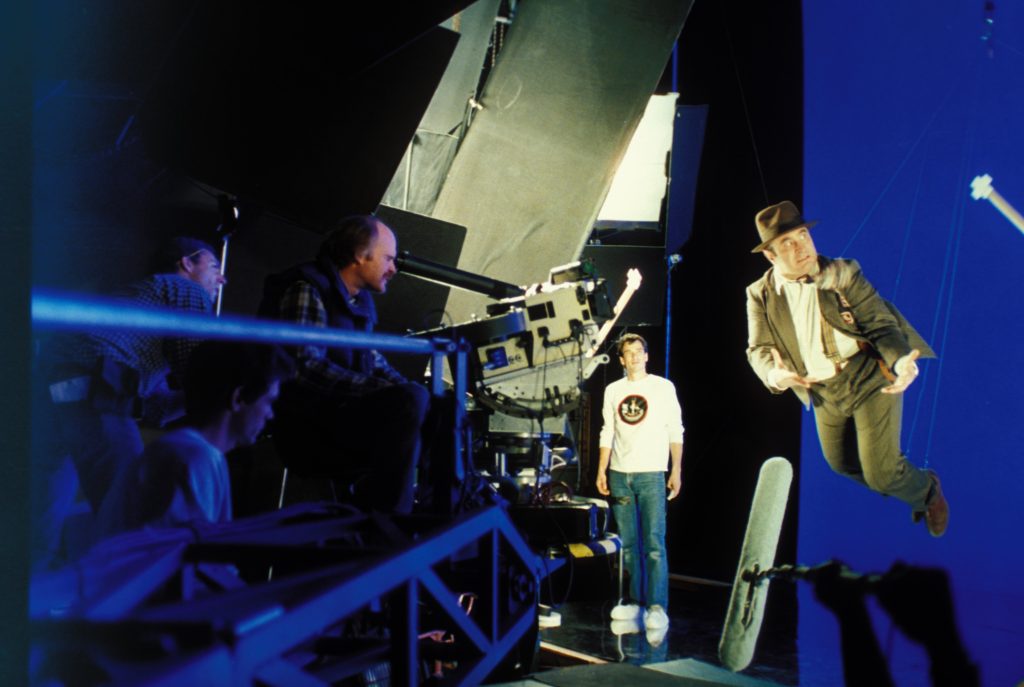

Speaking to the time he spent on set in Atlanta for the MAC-Z battle, Georgiou elaborates, “Being on set was wonderful, and it was the only way for me to be able to understand the scope of that sequence. Production built this tremendously huge 360-degree set where they had shipping containers stacked seven or eight high, covered in blue screen for the set extensions. There were over 100 extras and 150 lights from every direction flashing on set. I was there early to watch the rehearsals and see the choreography of how it was going to go down. The Duffer Brothers were kind and generous with their help and questions, and they asked our opinions too.”

A lengthy shot in which Mike Wheeler and his companions attempt to avoid the chaos as Demogorgons wreak havoc on the base was done in a continuous take and represents one of the primary reasons for Georgiou’s presence on set, as he outlines, “The oner was a huge accomplishment. It was filmed handheld and with a fast shutter speed, so it looked like war journalism footage.”

As delicate as the on-set choreography was, ILM’s postproduction work was equally taxing. “Everything had to line up on set, but then it took a long time to put the layout all together. We had to animate, light, and comp on top of the plates. The 100-plus soldiers have muzzle flashes, their guns are emitting shells, the bullets create dents as they hit the Demogorgons’ skin, the Demogorgons have breath and their bodies are sweaty – there’s so much detail put into the oner, and into the MAC-Z sequence as a whole, and it went off without a hitch.”

Extending an Exterior

A second military base resides in the Upside Down, and Eleven infiltrates it by leaping over its perimeter. A set was constructed on a soundstage, but ILM needed to build a large extension for the base’s exterior and connect it to the set piece. “We started with the asset and did a significant extension of the base,” says Sanyal before turning to an intriguing fact about ILM’s contributions to the scene. “When Eleven is running prior to her jump, there are vines all around. We made sure the vines were properly placed and physically accurate from one shot to another in order to maintain continuity.

“Once Eleven jumps, she lands on a practical glass roof, but many shots in that area were completely CG,” Sanyal continues. “To integrate those shots, everything had to be balanced so the CG portions looked like the same type of glass roof that she jumped onto. It was an exciting sequence to work on. The military base asset was originally created to be smaller, so we rebuilt on top of it. There was barbed wire and multiple spotlights around it, and we wanted the lighting to pick up those interesting highlights.”

A Melting Menace

In another intense scene, Nancy and Jonathan become trapped in a room within the Upside Down’s version of the Hawkins laboratory as the facility essentially melts around them. Although this was filmed on set, the client determined the gray material they had used for sludge was too thin and had surface air bubbles that made it appear too much like water. The Vancouver studio was tasked with replacing that practically filmed liquid with a substance even thicker than house paint.

“We were concerned about continuity and coming up with the reality, weight, and viscosity of the fluid that the characters were moving through. They were walking through it and pushing their hands through, so there was interactivity that was happening. The fluid needed to feel thick and viscous, and ultimately every background – the walls and floor, and even the surface of the table that Nancy and Jonathan were sitting on – was completely replaced,” Georgiou discloses.

Kuruvilla shares Georgiou’s perspective on the matter of ensuring that the melting remained consistent, stating, “One of the things that I found most challenging was keeping continuity across the shots while the walls were melting. When you’re replacing a fluid and matching something that’s real, you’re matching a physics-based simulation and taking it over in CG. Blending between CG and the plates is the hardest part.”

Upside Down Destruction

The melting room’s strange properties are caused by an energy sphere atop the Hawkins lab. The client-supplied brief described the sphere as having an outer shell that cloaks its interior core, but exploring the specifics was left to ILM. “One of the amazing things about this client is that we weren’t just a vendor – they were looking for partners in creating and building these otherworldly things,” Georgiou begins. “ILM had a spectacular concept artist in [visual effects art director] Amy Beth Christenson, who created the first images of the sphere and the sphere breaking apart. That art served as our inspiration for our two years of working on the show. From there, the effects team looked at cellular structures and human body-heavy references.”

In time, the sphere explodes, unleashing enough energy to wipe out the Upside Down, including the membrane wall. “An energy wave hits the membrane wall, and Vancouver was involved in that destruction. The wall is such a large and tricky asset – it’s so detailed, and it has effects like mucus and other elements that run over top of the wall,” Kuruvilla professes. “Destruction-wise, our effects teams did simulations on the wall, and we built veins, membranes, and fleshy pieces inside the wall to accentuate the break and tear as the energy hit it.”

The catastrophic demolition strikes many familiar landmarks in the Upside Down. “The Vancouver studio built the Hawkins High School. There’s the school and a packed car park, so we researched which types of cars were there at that point and kept it as faithful to the references as possible,” Kuruvilla continues. “Our last build was the Wheeler house, and our artists went through images from seasons one through four. They got drawings and plans of the set to figure out where the basement would be and what the wallpaper was. Where the kids play their games, the artists put up the same posters and all sorts of references from the show for when the house would be destroyed.”

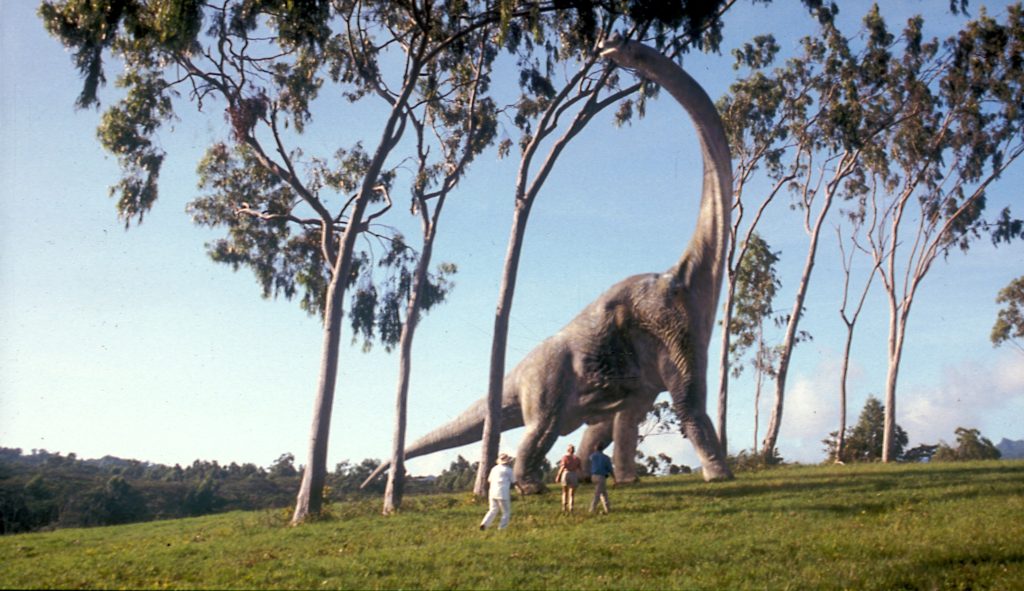

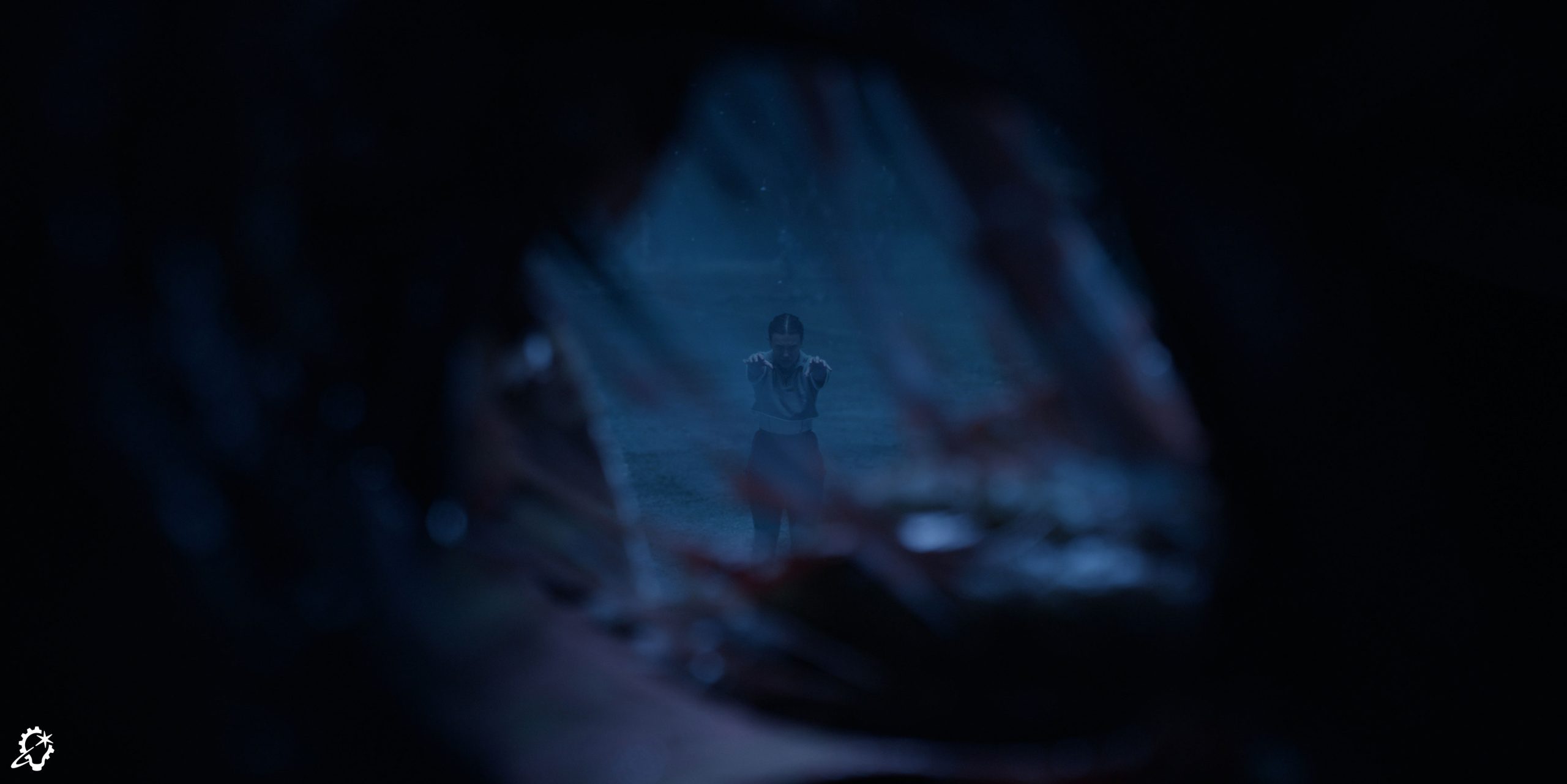

The sphere’s explosion reaches a heart-wrenching conclusion at the rift gate linking the MAC-Z base to the Upside Down, where Eleven stands defiantly as the rest of the Upside Down is being torn down behind her. “ILM’s Sydney studio did some of the destruction behind Eleven in that last emotional scene. The look of it came from the effects team, and we were very grateful. Many of our team members are fans of the show and wanted to add stuff that they think is cool and appropriate to the world of Stranger Things,” Tong affirms.

Spore Wars

While sensational effects tend to get the majority of fans’ attention, even the subtle spores residing in the Upside Down’s air throughout the season provided ILM with an opportunity to work its magic. “The spores have their own individual rotation, so we built them like a ravioli to have some width and thickness in their center. This way, when they rotated toward the camera, you wouldn’t lose them as if they were a single plane. That rotation, plus any camera motion, became challenging because it could quickly start to look too much like snow or as if we’re traveling through hyperspace in the Millennium Falcon,” Georgiou concludes.

The filmmakers art directed the spores’ placement and movement, guaranteeing each scene contained the right amount of spores while avoiding any errant spores falling in undesirable locations. “Since the spores are transparent, our work depends on what the back plate is. There’s no set formula to say we’re going to do, for example, 70% opacity and have it work across the whole shot – it’s not going to be like that. If the plate is too dark or too light underneath, we have to manually change the spores’ opacity or the sharpness of the edges to work with the plates.”

Sanyal homes in on the troubles inherent in lighting the spores that ILM’s Mumbai studio inserted into Eleven’s battle with the army near the billboard, imparting, “There are spores all around, and we need to consider how they will react to every light. The soldiers carried guns, and each gun had a light source attached to it. Every time the guns move, the spores in that area need to react to it. Most people don’t realize how much effort goes into our work. When you genuinely dive into the levels of detail that are required, it’s incredible. The spores are so minute, but their behavior and movements are specific. Spores kept us on our toes for a long time [laughs].”

A Worldwide Wonder

The smooth collaboration across ILM’s Sydney, Vancouver, and Mumbai studios on the final season of Stranger Things demonstrates the extent of ILM’s capabilities as a global visual effects powerhouse. “This show is a great example of ILM’s cross-site workflows. Our supervisors and teams all worked together. The level of communication was terrific, and that can be hard – you can have too much conversation, where people are talking and not enough is getting done. At the same time, you can have too little, where things get missed and extra time is needed to solve something. But on this show, right from the start, we were so well-aligned and efficient,” Kuruvilla attests.

“Our three sites worked together so well,” Tong adds. “Production expertly split the work between us, establishing the schedule of who developed what and how these assets would come together in a way that each studio could deliver their work on time. Bill is always so nice to work with and accessible, especially when his office is next to mine [laughs]. If I have questions or anything I want to show him, I can get feedback or approval as soon as possible, so the team can keep going and get the shot done.”

“This sort of collaboration is what ILM is all about. In almost every show we take on, we are working with multiple ILM sites,” Sanyal observes. “We received so much support from the Sydney studio – my CG supervisor, Kunjal Dedhia, stayed in continuous touch with theirs because there were assets that we were handing over to each other. The collaboration we see at ILM is important, and it’s unique compared to other places I’ve worked. Everyone here feels part of the same team and shares the goal of delivering a show to the best of their ability – the only difference is the time zone.”

For Georgiou, having the role of overall ILM visual effects supervisor meant stepping back at times to allow his associate visual effects supervisors to lead their respective studios. “I actually had to pull myself out of being as involved with the Sydney team because I was so used to being as available as possible, and I needed to let Stephen – and George and Arnab, as well – work with their teams on their own and build those relationships. They are so smart and gifted, and watching them encourage and support their artists blew me away,” Georgiou avows.

Kuruvilla praises the range of tasks ILM completed this season, saying, “The sheer volume of work that ILM took on for this season was astounding. We did such an immense number of varied shots and sequences with different assets – there was so much cool stuff in one show, and it was so rewarding to be a part of it.” Tong confirms, specifying, “The visual effects are so diverse. Digital doubles, environments, big effects, plate-based work, full-CG work, characters – it’s all there.”

Of course, confronting such monumental assignments is what ILM thrives on, as Georgiou contends, “Working on Stranger Things felt like having Christmas morning every day – waking up and opening shots from Vancouver, and then looking at the new shots in our daily sessions from Sydney and Mumbai. It was a joy.”

–

Jay Stobie (he/him) is a writer, author, and consultant who has contributed articles to ILM.com, Skysound.com, Star Wars Insider, StarWars.com, Star Trek Explorer, Star Trek Magazine, and StarTrek.com. Jay loves sci-fi, fantasy, and film, and you can learn more about him by visiting JayStobie.com or finding him on Twitter, Instagram, and other social media platforms at @StobiesGalaxy.