Members of ILM’s visual effects team discuss their cutting-edge approach to crafting a scene from the Emmy-nominated Skeleton Crew through their real-time rendering pipeline.

By Jay Stobie

Industrial Light & Magic’s visual effects capabilities have been synonymous with innovative approaches since the company’s inception, as ILM creatives regularly transform theoretical techniques into groundbreaking developments that emerge as everyday solutions. One such forward-thinking application involves ILM’s use of real-time rendering to present their work to visual effects supervisors and client filmmakers in an immersive fashion that allows them to immediately incorporate the feedback they receive.

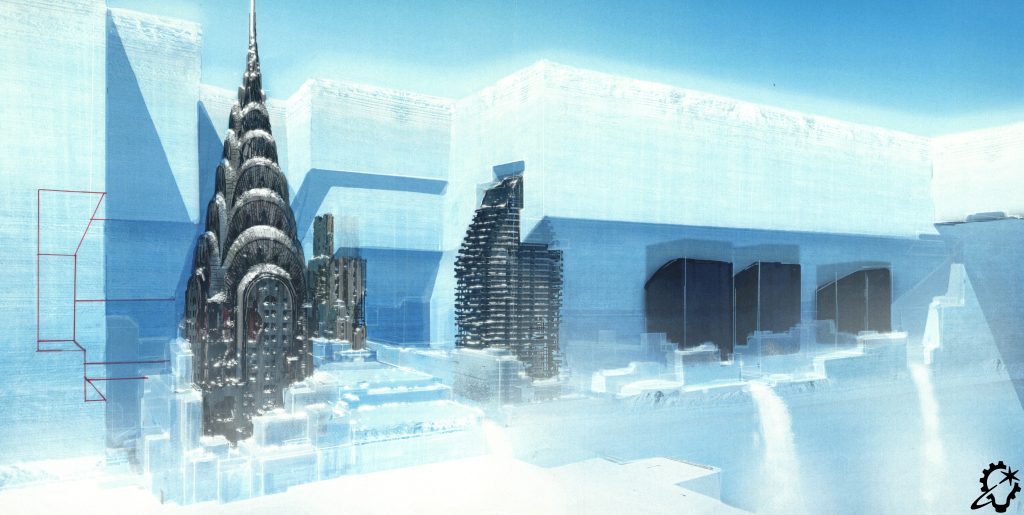

This process was utilized in “Zero Friends Again,” the sixth episode of Star Wars: Skeleton Crew (2024-25), for a sequence depicting Fern (Ryan Kiera Armstrong) and Neel (Robert Timothy Smith) as they ascend a ladder above the snowy plains of the planet Lanupa. A roundtable of ILM team members, including real time principal creative Landis Fields, environment supervisor Andy Proctor, technical artists Will Muto and Kate Gotfredson, and lead compositor Todd Vaziri, joined ILM.com to chat about trying out a new real-time workflow to craft the depth-defying ladder-climbing sequence in Skeleton Crew.

An Interactive Overview

“In traditional visual effects, artists show their work in dailies as a 2D-rendered movie and get feedback,” says Andy Proctor. “They work on those notes, re-render it, and present it again, usually the next day.” However, real time gave Proctor and his colleagues the chance to incorporate some of their earlier virtual production workflows to achieve an interactive review process. “I was in the virtual art department on Skeleton Crew, and we would do dailies where the key creatives would be in VR headsets while looking at these environments. To build the actual sequence, we had the entire thing set up in real time for the creatives to view on a normal screen. All the plates were loaded, and the blue screen was keyed out in real time. It’s essentially the same as when the visual effects supervisor is looking at a regular 2D review, except the whole scene is live.”

The planet Lanupa environments for those Skeleton Crew reviews were drawn from material originally created for ILM StageCraft’s virtual production pipeline, as Todd Vaziri highlights, “There are extended sequences where the actors were filmed for this environment on the ground level that were built by our StageCraft crew. There were already rounds of art direction, design, and construction, and that had to be approved by the filmmakers before first unit filming began with the actors. They would get in-camera finals using all of our StageCraft LED technology and real-time rendering technology. All of that work, especially on the creative side, had been done.”

Roguish Roots

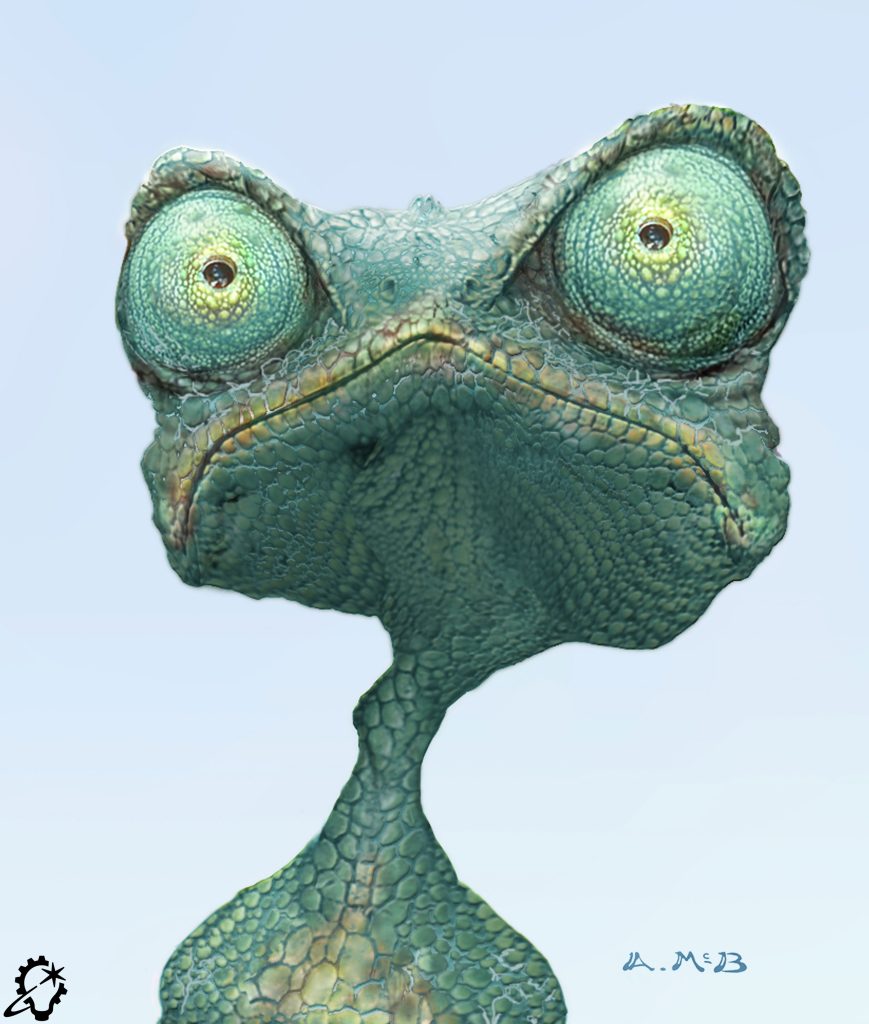

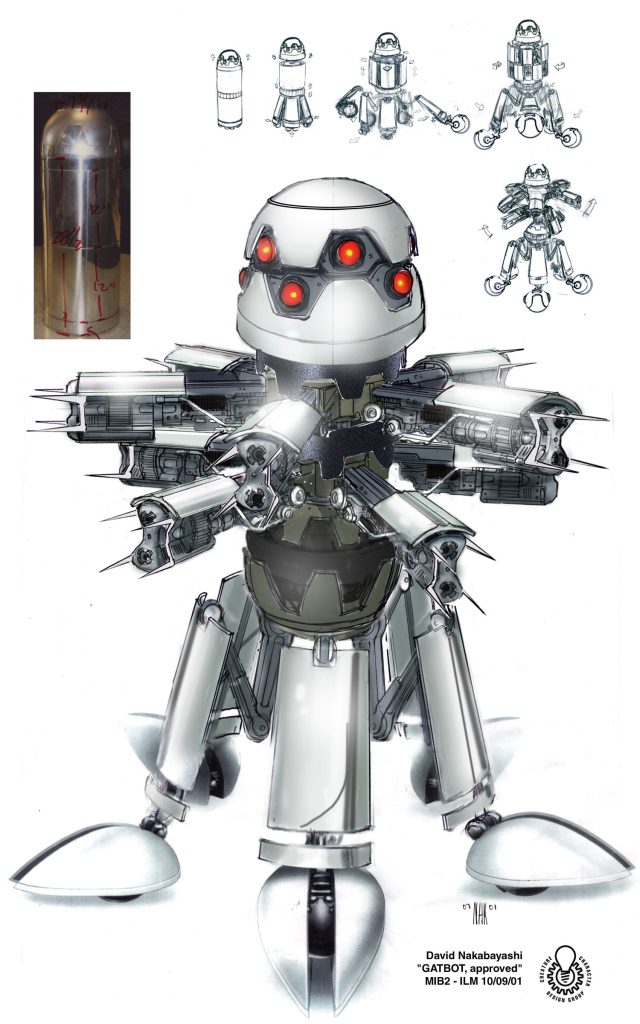

Looking back to the origins of these real-time elements, Proctor points to ILM’s proof-of-concept contributions to the creation of K-2SO in Rogue One: A Star Wars Story (2016), also overseen by Skeleton Crew production visual effects supervisor John Knoll, as “a technical milestone for real-time visual effects,” which had usually been reserved for games and interactive projects during that time period. “You skip ahead to Skeleton Crew, and now you’ve got much more of a crossover, because we’re using real time to design the environments and work out how they’re going to be shot.”

The Mandalorian’s (2019-23) season three finale followed Rogue One as the next benchmark on the path to the real-time process harnessed for the Skeleton Crew cliff climb. “[Skeleton Crew] represented the natural progression of working with [executive producer] Jon Favreau on The Mandalorian, because we were always pushing the boundaries,” Landis Fields notes, as ILM’s use of StageCraft’s LED-based volume prompted them to lean into virtual production techniques for a variety of different disciplines. “On the volume, you have a real-time environment, and it’s working for in-camera finals. So there’s already a step towards what you’re doing in real-time being what you’ll see in the final picture,” Proctor chimes in.

In The Mandalorian “Chapter 24: The Return,” ILM chose the astromech droid R5-D4’s descent into the cavern housing Moff Gideon’s (Giancarlo Esposito) secret Mandalorian lair to exercise the most recent real-time advances for the scene’s final pixel shots. “We had done that years ago on some of the K-2SO shots for Rogue One, but real time had changed a lot since then,” Fields adds. “On The Mandalorian, we were able to test integrating real-time visual effects reviews with [visual effects supervisor] Grady Cofer and [animation supervisor] Hal Hickel.” Instead of simply giving notes for changes that would be made at a later date, the supervisors could quickly see the impact of their requests for lighting changes and other alterations.

A New Scope

ILM’s ability to successfully demonstrate the viability of that real-time process was met with an immense wave of support, as Fields credits Jon Favreau, John Knoll, head of ILM Janet Lewin, and Lucasfilm’s Rob Bredow for being strong proponents of continuing on the cutting-edge course. Nevertheless, Fields emphasizes that this approach was intended to be one of many tools on which they could draw, as the choice of which technique to pull from ILM’s ever-growing arsenal of production pipelines would always come down to “the right tool for the job.” While the majority of the StageCraft volume LED in-camera work for Skeleton Crew was done using ILM’s proprietary Helios renderer and engine, this particular sequence was an opportunity to also see where the use of real time could be pushed and leveraged in novel ways.

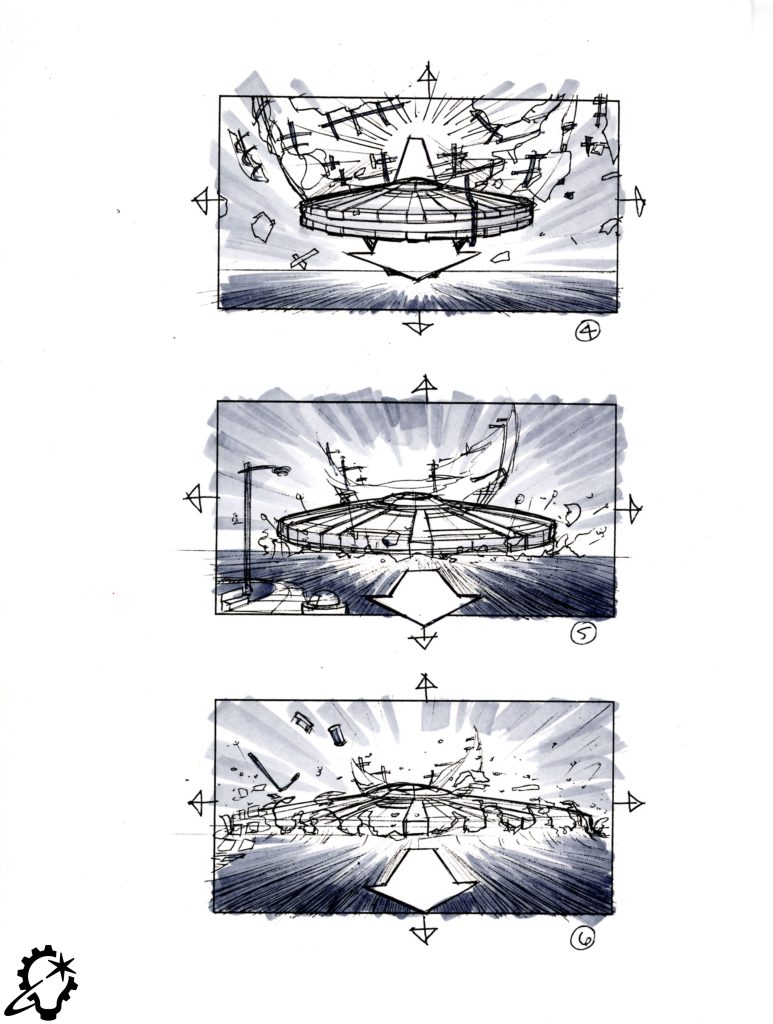

Perceiving The Mandalorian’s season three finale as a major real-time stepping stone, Proctor recalls that ILM elected to expand its use to an even greater extent, as he posits, “Now, we’re going to take a sequence and cut it in among live action that was shot in the volume and other traditional visual effects that are rendered offline. It has to match the other sequences and be as visually complex as everything else.”

With this real-time production workflow firmly in place when ILM’s work on Skeleton Crew commenced, Proctor recalls the ladder-climbing shot from “Zero Friends Again,” saying, “We knew it was an important moment, because it establishes that Neel is scared. Kate Gotfredson was able to set the shot up in real time so we could do a dynamic height or vertigo wedge.”

This arrangement enabled them to consult with John Knoll and ILM visual effects supervisor Eddie Pasquarello in real time, experimenting with a variety of elements, from pushing the background forward and away to tweaking the lighting. Speaking to the capacity to review several shots in a row in a single cut with per-shot interactivity, Vaziri adds, “We had a mini-editorial cut with works in progress. Being able to show an entire sequence to the visual effects supervisors and saying, ‘Yeah, this is how it’s going to look, but we can interactively move things around and instantly see in the context of the cut,’ that’s a game changer right there.”

Praise for the Process

The benefits of utilizing a real-time production pipeline are as diverse as the galaxy far, far away that ILM has built on screen. “Real time is very flexible. We were able to develop custom real-time compositing tools very quickly using blueprints, which allowed us to preview the live-action footage directly on top of our environments. With these tools, we could experiment with framing, set dressing, and lighting with immediate feedback,” Kate Gotfredson outlines.

Will Muto offers his appreciation for the interactive workflow, surmising, “You get more bites at the apple. The creatives are able to iterate more and hone in on what they want. That’s where the power is here.” Muto applauds ILM’s real-time process for its smoothness, continuing, “There were no huge surprises. We added tooling around our color pipeline to apply our shot grades within the real-time minicut, so we were certain that artists working real time were viewing plates in the exact same context that the compositors would be viewing downstream. The showrunners [Jon Watts and Christopher Ford], John Knoll, and Eddie Pasquarello all got what they wanted in extremely short turnarounds.”

Fields echoes the praise for the collaborative efficiency, relaying, “In the traditional pipeline, having a review is not just jumping on a call. There’s time that an artist has to dedicate to preparing material to review.” With real-time sessions, Fields divulges that his team can simply “throw together a meeting, and everyone joins the call. There was no prep other than that I had to be at my desk.”

ILM’s ‘Personnel’ Touch

Proctor articulates an unexpected advantage that has a habit of emerging from those video calls, when his team could share a screen and jump right into a real-time session. “You get more moments of serendipity. When someone is showing their work, you can see what they’re doing live and interact with it yourself. By doing that, we’d get these ‘happy accidents,’ where someone was playing around with the water shader and hit a parameter that made everyone say, ‘That looks amazing!’ because suddenly the water felt incredibly translucent. Those collective learning moments happen all the time, and it’s very difficult to get that any other way than in real time.”

The human element also factors into another attribute unique to ILM, as the unprecedented level of professional experience concentrated within the company’s ranks allows its personnel to maximize the real-time workflow’s potential. In terms of oversight, ILM’s senior staff have the expertise to recognize how their colleagues’ talents could be leveraged for optimum efficiency. “Where do you want the masters of these crafts to spend their time? Andy and I were very keen on paying close attention to who was doing what,” Fields remarks. “It’s not about everybody doing everything. That’s another part of working within the pipeline and being smart about the division of labor.”

Applying environments created for the volume in the real-time review process gave some ILM artists the chance to work across multiple stages of development, as well. Once Proctor had finished with the set design alongside his colleagues in the virtual art department, content creation supervisor Shannon Thomas oversaw the creation of the final environment used in the StageCraft volume. Digital artist Nate Propp contributed to both the volume build and the real-time work in post-production, which Proctor then returned to help oversee.

“Not only was Andy familiar with the worldbuilding exercise that he had done,” notes Fields, “but he was familiar with it from the ground-level perspective.” When the real-time crew began on the ladder shots that looked down on Lanupa’s surface, they could rely on Proctor’s insights from his role in crafting that environment. “Andy knew what the environment was supposed to look like,” Vaziri agrees, indicating that his work as the lead compositor involved the important task of exposure balancing for the foreground, which meant “a lot of rotoscoping, compositing for the extractions, a tiny bit of effects renders for some blowing mist that went through the environment, a lot of stock stuff that the compositors put in. From our perspective, we had to deal with very few renders overall, which I absolutely loved.”

The Legacy Ahead

“Back in the day, when they did K2, that was about the ‘if.’ Skeleton Crew wasn’t about if we could do it. We knew we could do it,” Fields summarizes. “To be able to see that sequence in the cut, scrub, and get valuable notes that are efficient with the time from the visual effects supervisors was huge. The review was essentially a three-dimensional, real-time composite set that we could move around.” Perhaps the greatest testament to the real-time process is the response its use garnered from individuals throughout the company, as Fields shares, “Everybody outside of our phase, the other departments downstream, started to see the value here.” This particular real-time workflow has joined ILM’s illustrious array of visual effects pipelines, becoming yet another evolutionary tool to be called upon when ILM is deciding which approach is best suited for the visual effects shot it is tackling that day.

—

Jay Stobie (he/him) is a writer, author, and consultant who has contributed articles to ILM.com, Skysound.com, Star Wars Insider, StarWars.com, Star Trek Explorer, Star Trek Magazine, and StarTrek.com. Jay loves sci-fi, fantasy, and film, and you can learn more about him by visiting JayStobie.com or finding him on Twitter, Instagram, and other social media platforms at @StobiesGalaxy.
