The ILM visual effects supervisor speaks on ILM’s contributions to the blockbuster film that brought Marvel’s First Family into the Marvel Cinematic Universe.

By Jay Stobie

Marvel Studios’ The Fantastic Four: First Steps (2025) transports audiences to the Marvel Cinematic Universe’s Earth-828, where Reed Richards (Pedro Pascal), Sue Storm (Vanessa Kirby), Johnny Storm (Joseph Quinn), and Ben Grimm (Ebon Moss-Bachrach) must prevent Galactus (Ralph Ineson) and his herald Shalla-Bal (Julia Garner) from destroying their entire planet. Directed by Matt Shakman, whose acclaimed credits include helming episodes of the long-running comedy series It’s Always Sunny in Philadelphia (2005-Present) and the mystical Disney+ hit WandaVision (2021), The Fantastic Four leans into a retro-futuristic aesthetic that blends 1960s-inspired designs with out-of-this-world technologies.

With this innovative endeavor in mind, the filmmakers called upon Industrial Light & Magic and its accompanying half-century of visual effects expertise to help execute Shakman’s vision, with a particular focus on The Thing, Galactus, the climactic third act battle in New York City, and more. Daniele Bigi (Ready Player One [2018], Star Wars: The Rise of Skywalker [2019], Eternals [2021]), who served as the ILM visual effects supervisor on The Fantastic Four, sat down with ILM.com to discuss the company’s numerous contributions to the project, from devising a fresh approach for portraying The Thing’s rocky features to constructing Earth-828’s distinctive New York City skyline.

An ILM Overview

As the ILM visual effects supervisor on The Fantastic Four, Bigi spearheaded ILM’s involvement on the project from the company’s London studio, working closely with invaluable colleagues like ILM animation supervisor Kiel Figgins and ILM senior visual effects producer Claudia Lecaros. “In this case, ILM didn’t split the work between multiple ILM facilities, so my team ended up keeping all the asset and shot work in London. We were assigned the major task of handling the third act of the movie, which centered on the final battle between the Fantastic Four and Galactus,” Bigi tells ILM.com. “Although it’s divided into multiple sequences, the third act is a continuous narrative from Galactus’s arrival on Earth through the end of the film. It was a fascinating and important piece of work to deal with.”

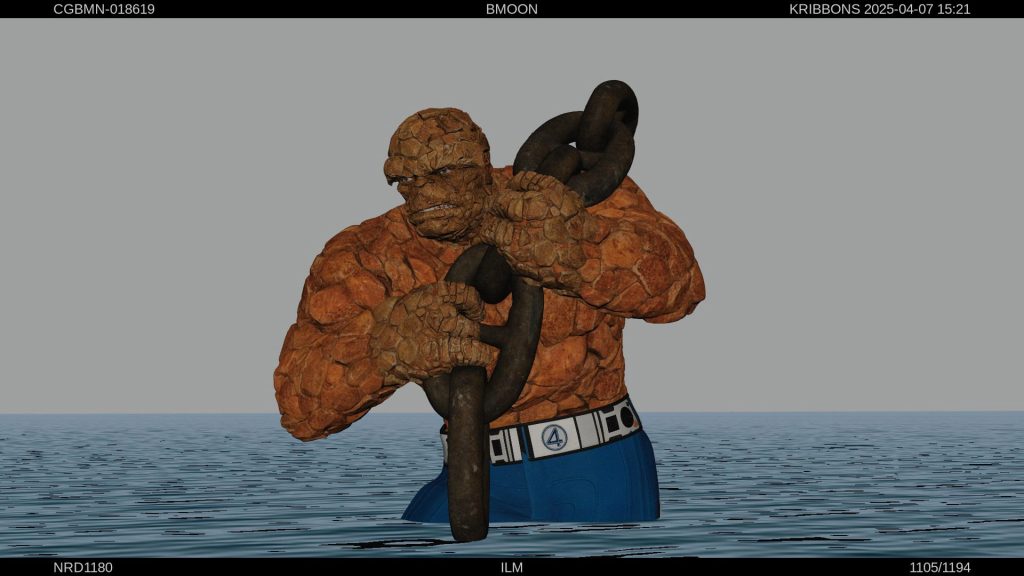

ILM’s assignment included devising an innovative look for Ben Grimm’s iconic alter ego, The Thing. “We did all of the initial development with [production visual effects supervisor] Scott Stokdyk and [visual effects producer] Lisa Marra from Marvel, in collaboration with [head of visual development] Ryan Meinerding. Ryan provided us with the concept for The Thing, which is what we based our work on,” Bigi relays. As the leading vendor for The Thing, ILM developed the entire character and then distributed the asset to the film’s other visual effects vendors for their own sequences.

“After the initial development of The Thing, we were assigned another prominent character to build. Since ILM had several shots in which Mister Fantastic stretched his body and used his ability in an extreme way during the final battle, ILM ended up leading the look development of Reed Richards, too,” Bigi explains. In January 2025, ILM’s success with these character creations prompted Matt Shakman to task Bigi’s team with crafting the Fantastic Four’s immense nemesis, Galactus.

“Another big component to ILM’s work was the development of New York City, which was an imaginary version of it based on Marvel concept art,” Bigi continues. “Roughly 90% of the New York City shots were done in computer graphics by ILM. It’s a 1960s futuristic New York, and while certain aspects appear exactly like our New York, there are many buildings and stylistic elements that reflect both 1960s and futuristic designs. A large section of the city, including Times Square, was ingested from Sony Pictures Imageworks, with whom ILM collaborated closely to combine different city blocks into a unified layout with a matching style, color palette, and overall look.” Most of the city setup was handled by environment supervisor Stacie Hawdon and CG supervisor Tobias Keip at ILM’s London studio. In total, Bigi estimates that ILM contributed between 350 and 380 shots to The Fantastic Four.

Thinking the Thing Through

“At ILM, we aimed to deliver on Matt Shakman’s vision by dramatically changing what had been done with The Thing in the past. We sought to create the most believable, realistic performance that would respect Jack Kirby’s original design, from the size of the rocks to the very specific rock formation of The Thing’s brow,” Bigi shares. Animating facial expressions for a character whose face is composed of rock proved to be a considerable challenge. “We explored different options, but I always wanted to keep the rocks as rigid as possible. If we started to squash and stretch them, The Thing would resemble what was done in the past with plastic material and foam prosthetics.”

Leaning into The Thing’s bouldery frame, Bigi’s team created small, undefined gaps between the rocks. “Depending on the expression, we could move the rocks in these minuscule spaces. Additionally, we allowed the rocks to gently stretch in areas that were invisible to the camera, giving us larger gaps that let us keep the rest of the rocks completely rigid.” ILM employed another sophisticated technique for The Thing’s face and body, running an effects simulation on the rocks rather than dealing with geometric skinning. Bigi praises FX and creature technical director Maybrit Bulla, who used Houdini to create a custom setup to control the collision between the rocks. “We used our blend shape technology to move the underlying surface, but there are rocks on top of it that are actually colliding. They push each other and land in a natural position. In some shots, we had to guide the simulation in an artistic manner to avoid having rocks go into unwanted territory and seem weird or strange. The process is something new that we developed for this movie.”

When it came to actor Ebon Moss-Bachrach’s performance capture for The Thing, ILM referenced the work-in-progress geometry data from Digital Domain (another effects vendor on the film). “The data was useful for the initial stages and the blocking animation, but when we started to go into the minutiae with Scott Stokdyk and Matt Shakman, we ultimately worked on our own system and reanimated the character for our final animation,” Bigi details, crediting CG supervisor Marco Carboni for developing a workflow to quickly ingest data from Digital Domain and transfer it to ILM’s proprietary facial rig.

Rules for Reed Richards

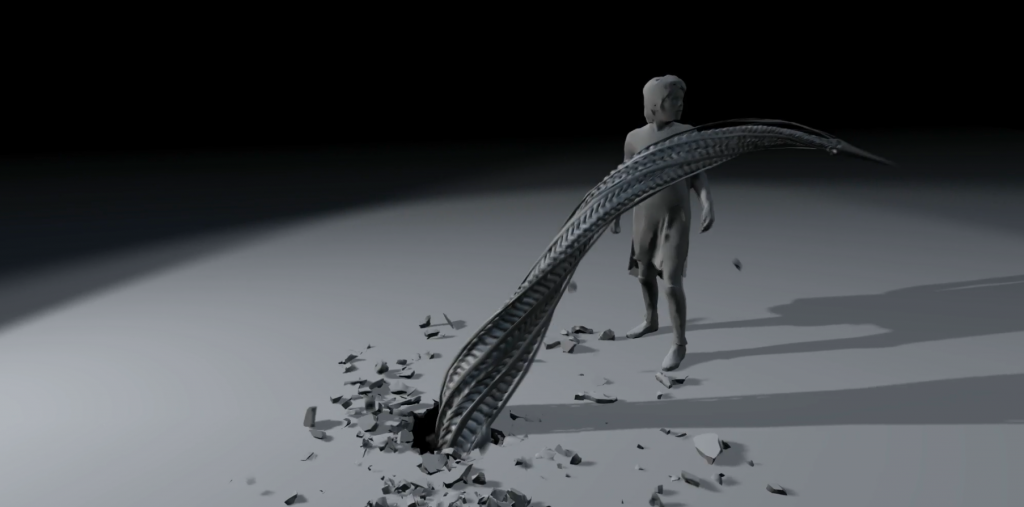

Alongside Shakman, ILM outlined clear guidelines for Reed Richards’s capabilities as Mister Fantastic. “Matt was keen to avoid creating what we called a ‘noodles’ or ‘spaghetti’ feeling. How we controlled the stretch was unique and based on Matt’s vision,” Bigi recalls. “Instead of developing the character for months and then realizing that it didn’t behave in the right way, I proposed exploring various 3D action poses with extreme body stretch from several angles. Matt was incredibly receptive to the notion of rendering these static frames before having a functional rig or muscle simulation for the animator to use.”

Setting rules for Mister Fantastic became essential to ILM’s process. “What can Reed do? Do we want to stretch the neck, or don’t we? We decided not to, so there’s not a single shot where you see the neck stretching a lot,” Bigi notes. “We established a rule that only Reed’s limbs would stretch, meaning his upper torso and shoulders would remain the same width as the actor’s. Another rule dealt with his bone structure. While stretching, his elbows and knees would be more defined, the idea being that the skin was getting thin and wrapping around the bone. This was all discussed with Matt and Scott and developed in the initial stage where we did our 3D maquette action poses.”

Bigi took inspiration directly from Marvel’s comic books, as well. “Many comic book artists before us, in particular Alex Ross, maintained a very strong V-shape when portraying Reed’s upper body. So, in the ILM shots where Reed is stretching, we kept the lat muscles on his body fairly large, like an athlete or swimmer,” Bigi declares. “We also decided Reed would snap his limbs back to a natural pose relatively quickly. The thought was that it wasn’t easy for Reed to stretch, so he would only do so on important occasions. He doesn’t do it for fun, at least in this movie.”

While Reed’s arms and legs stretch extensively, Bigi points to another key decision ILM made when generating the look and feel of Mister Fantastic. “The stretch of his fingers is minimal, and the gloves you see are usually the normal size as established by the practical costume designer. The concept being that, unlike the fabric close to his body, the actual fabric of the gloves didn’t need to stretch at all.”

Seeing Sue Storm

As was the case with The Thing, ILM pursued a unique path to conveying Sue Storm’s abilities in the final battle. “Rather than relying on particle simulation, all of ILM’s Sue effects were based on optical elements,” Bigi reflects. “The Sue effects were meant to be analog, in a way. There are no effects simulations of any kind. Most of those shots were crafted by our compositing team, so it’s a 2D-based approach using references of how lenses naturally create refraction and color variation. You see that we enhanced and exaggerated the prismatic fringes that occur with specific types of lenses.

“Although this route was simple in a technological sense, it was nevertheless quite effective visually, and blended well with the atmosphere of the movie,” Bigi concludes. “Going with the latest, state-of-the-art technology is not always the answer. In this case, it was the opposite. We wanted it to feel simple and analog, so we stayed with the real optical effects. It’s all about what the director wants and the feeling you wish to convey.”

Grappling with Galactus

Unlike the challenges that ILM tackled with The Thing’s rocky features, the surface of Galactus’s face resembled the actor to a much greater extent. “We were able to use Ralph Ineson’s performance through a normal blend shape technique for Galactus’s face. Matt wanted to infuse Galactus with a god-like aspect, so he had us downplay the realistic human aspect and micromovements of the actor’s face. We reduced the range of motion and kept the face a bit firmer,” Bigi states. “For the body, we received a scan of the beautifully constructed costume, but at the end of the day, ILM replaced it with CG in all of our shots because of its need to appear metallic.”

Representing Galactus’s true scale also came into play. “We determined a specific height for Galactus, so the camera had to conform to that size. There are several shots with plate photography, but the majority was done digitally, especially due to the interaction between Galactus and the city,” Bigi reports. “Galactus’s body had to be covered with thousands of tiny lights, which couldn’t be done realistically with prosthetics, and he’s so large that the amount of detail necessary to set the scale was tremendous. We scattered literally millions of tiny pipes, greeblies, and geometric objects to increase the sense of scale. At a distance, our Galactus was the same as the costume, yet it was much more elaborate in the extreme close-ups.”

ILM held conversations with Matt Shakman and Scott Stokdyk about the bridge devices that serve as a centerpiece for the climactic conflict with Galactus. “We developed an effect that we called ‘bridge effects,’” Bigi notes. “The bridge is an amazing device that – spoiler alert – Reed conceived to transport Galactus to another location in space. Because of the 1960s style of the movie, we avoided a digital quality for the portal. We found references and simulated optical effects rather than calling upon inspiration from the digital world. It was a real brainstorm with Matt and Scott. All sorts of ideas, such as having Galactus’s body stream with particles inside the bridge effects, came up in our conversations with Matt.”

A “New” New York

In preparation for depicting Earth-828’s New York City, Bigi traveled to New York for a 10-day shoot with The Fantastic Four’s second unit. “It was an amazing experience,” Bigi beams. “Based on the previs, there were certain shots we knew would be CG, but we tried to film as much as possible. Before going to New York, I used a combination of Google Earth and other digital resources to virtually scout Manhattan and propose methods to capture it from specific locations in a thorough fashion. I spent days capturing 360-degree HDRI panoramic views, mostly along 42nd Street, to build a library of texture and material references. At the same time, a small team from Clear Angle Studios scanned the entire road using a LiDAR [Light Detection and Ranging] scan.”

The work continued upon Bigi’s return to London. “Initially, we took the images of New York and removed all the buildings constructed after the 1960s. It was essentially a filter that permitted us to show this version of the city to Matt and Scott,” Bigi remembers. “Then, in collaboration with [production designer] Kasra Farahani and Scott, we drew inspiration from futuristic-looking buildings elsewhere in America, such as Chicago. We selected preexisting real-world buildings that had rounded shapes and concrete bases. Another selection was done by concept artists at Marvel who had come up with original designs.

“My team at ILM modeled those buildings, and we set their number and location along the street. We built several layouts and versions, gradually shaping the features of the street. That aesthetic relied on the props, as well,” Bigi asserts. “The cars and billboards resemble those from the 1960s, and we scattered spherical water tanks around the city. The phone booths aren’t based on their 1960s counterparts, as they were designed specifically for the movie. From the skyscrapers down to minute details like the color of the phone booths, everything is either a combination of real 1960s references or the artistically driven futuristic elements that are now synonymous with the film.”

The time and talent that ILM invested in The Fantastic Four has paid off for both the artists involved in the project and audiences around the globe. Upon seeing the final cut, Bigi gravitated towards one of ILM’s shots when ranking his top stand-out moments from the project, declaring, “There are several moments that I love, but for me, Galactus emerging from the water and entering Battery Park from the river is my favorite. The water simulation and the composition combine to create a wonderful shot to begin that sequence.” Applauding the work of compositing supervisor Juan Espigares Enríquez and his compositing team, Bigi concludes, “I think it’s one of The Fantastic Four’s most exciting and spectacular moments.”

—

Jay Stobie (he/him) is a writer, author, and consultant who has contributed articles to ILM.com, Skysound.com, Star Wars Insider, StarWars.com, Star Trek Explorer, Star Trek Magazine, and StarTrek.com. Jay loves sci-fi, fantasy, and film, and you can learn more about him by visiting JayStobie.com or finding him on Twitter, Instagram, and other social media platforms at @StobiesGalaxy.