Visual effects supervisors Jay Cooper, Andrew Roberts, Charmaine Chan, and Ian Comley take us behind the scenes of an unusual visual effects challenge.

By Lucas O. Seastrom

Ever since George Lucas and John Dykstra sat down in 1975 to discuss the former’s vision of capturing dynamic aerial dogfights between miniature spaceships in Star Wars: A New Hope (1977), Industrial Light & Magic (ILM) has made an art of solving creative problems in close partnership with filmmakers. As Lucas’ vision challenged ILM’s capabilities nearly 50 years ago, The Creator (2023) writer/director Gareth Edwards proposed an unconventional approach to filmmaking that would keep the visual effects crew on their toes.

Proof of Concept

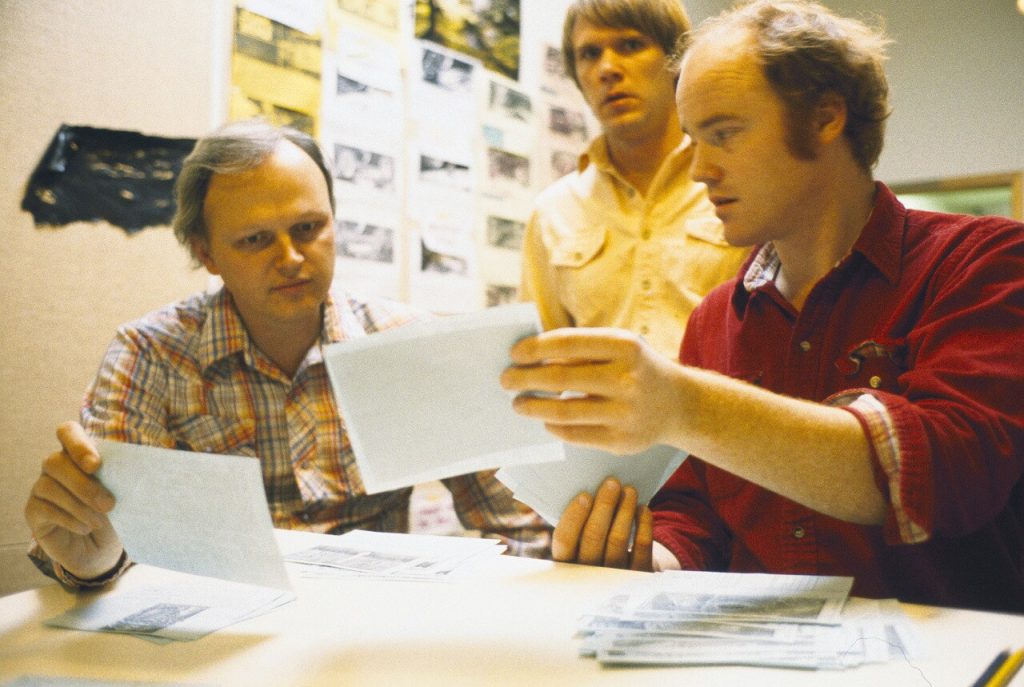

Edwards first collaborated with ILM on Rogue One: A Star Wars Story (2016), channeling the same rebel spirit of Lucas’ A New Hope. Envisioning his own science-fiction tale in The Creator, he would also channel Lucas’ audacity in pushing the limits of ILM’s capabilities. It began some years ago when he asked ILM’s executive creative director John Knoll (who supervised the visual effects for Rogue One) if the company was able to assist with a test reel that would demonstrate Edwards’ vision for a movie about a futuristic Earth where humans and artificial intelligence lived side by side.

“Gareth and his producer [Jim Spencer] went to Asia on what he described as a scout, but he also brought a camera along,” explains Jay Cooper, who would become The Creator’s overall visual effects supervisor for ILM. “He shot in a number of different locations to create a sort of think-piece, very documentary-style footage. Then he came to us asking to put some 50 shots together, which John supervised.”

Edwards provided his footage only. There was no accompanying data, no lidar scans, no HDRI captures of environments, none of the usual resources that visual effects artists rely upon. The challenge was to integrate digital elements – characters, vehicles, and locations – into the existing footage, including the replacement of real people, or components thereof, with robotic technology. “We tried to create rapid prototypes of what shots could look like by doing them in a more heavily-2D way,” Cooper explains. “We’d take frames, do a draw-over with James Clyne, who became the film’s production designer, and with a bit of fast projection work, get them into shots. We got a really convincing look with a modest amount of effort. Gareth explained that shots that usually take two or three months of work could be seen in three or four days.”

The proof-of-concept not only sold Edwards’ backers on making the film, but gave ILM a model for developing effects on a feature-length scale in this unusual, after-the-fact method. The Creator would be shot primarily on location in Thailand with a small crew and fewer resources. “Gareth wanted to shoot this ambitious movie,” says Cooper. “The artwork was phenomenal, but the catch was that we’d be really uncomfortable because we wouldn’t be given the things we were used to. We wouldn’t stop for a clean pass every time. We wouldn’t always know what all the shots were going to be because those would be determined in the edit. There were enormous designs for the scope of the movie. This was a big swing, it was sink or swim. So off we went.”

On the Ground in Thailand

Edwards remained committed to maintaining a fast, improvisational shooting style, often handling the camera himself. He would not inhibit his ability to engage in the moment with his actors, who included John David Washington as the protagonist Joshua, a world-weary soldier in search of his lost love, and Madeleine Yuna Voyles as Alphie, an artificial simulant in the form of a young girl who acts as both the story’s heroine and MacGuffin. Instead of the usual small team of visual effects personnel, ILM would send just one representative to Thailand, visual effects supervisor Andrew Roberts.

Roberts would be responsible for both consulting with Edwards and crew, including cinematographers Greig Fraser and Oren Soffer, and capturing as much data for each respective shot as he possibly could. “I was there to help make sure that things were filmed in a way that would give ILM the best chance of producing great, photoreal work,” Roberts says. “I wasn’t going to get in Gareth’s way.

“Early on, we had scenes with robots and humans existing together,” Roberts continues. “I asked Gareth which of the actors would be made into robots so I could mark them. Even if we’re not putting them in the motion-capture suits, I could take measurements and make a turntable, all to give the team information. Gareth looked at me and said, ‘Don’t know.’ It wasn’t something he wanted to focus on. He would pick actors to make into robots later. I wasn’t sure how aggressively he was going to create negative space with these characters. It turned out that their bodies were more or less the same, and you’d mainly see the mechanism when it came to their arms and their heads. But I still didn’t know at the time, so I recorded the information about where Gareth was pointing the camera and determining what backgrounds I needed to capture to reconstruct a clean plate.”

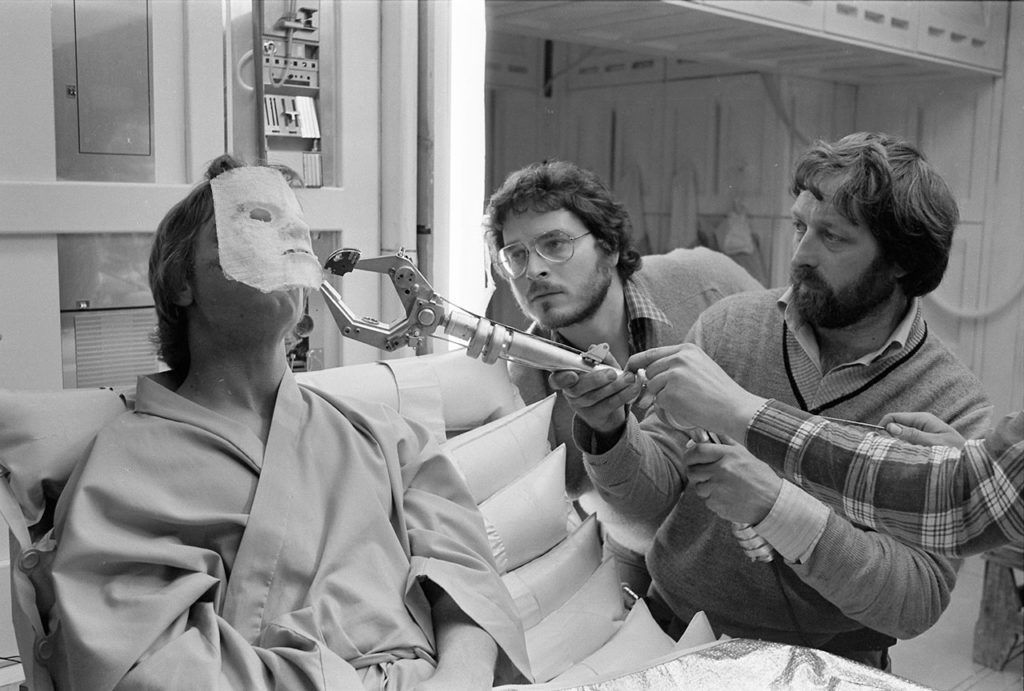

Another major challenge involved the simulants, A.I. characters who appear human, save for the aft portion of their heads, which feature a bold mechanical structure. Edwards and Clyne had created initial concept art, but it was left to Roberts and Cooper to determine the best means to track the live actor’s facial movements on set in order to integrate digital components during post-production.

“I think the movie doesn’t work at all if you can’t get a convincing Alphie,” says Cooper. “It’s where your eye is looking. There are 400 shots of her. In prep, I pitched the idea of putting a sock over her head and dressing the edges of where the contours are so that we know exactly how to define the delineation point between where her mechanical components connect to her skin. I asked about doing makeup to address the edges, and we could fill in the rest. Gareth said, ‘No, we’re not going to do that because I need her.’ When you’re working with a child actress, there’s only so many hours you can work. He wanted her on set for every minute she could be.

“Then we had to figure out what we could do in terms of tracking dots that were low impact and didn’t interfere with the acting,” Cooper says. “I explained the ask to [layout supervisors] John Levin and Tim Dobbert, and said that I didn’t know exactly what the designs were going to be, but they said, ‘Well, let’s put some tracking dots on the bridge of her nose, one on the temple, a couple on her neck, and we think we can figure that out.’ So that’s what we did! [laughs] It’s a leap of faith.” Roberts then collaborated daily with the makeup department to place tracking dots on the simulant actors, each of whom required a unique arrangement because of their varying physiques.

“The benefit of having someone like Gareth is that he used to be a visual effects artist and he has a clear idea of what the end result will be,” explains Roberts. As an example, he explains how Edwards shot an early moment in the film when Joshua watches the suborbital ship NOMAD launch a missile at a group of small vessels just offshore. “Gareth knows that he wants NOMAD to be in frame, so he’ll frame for it and then tilt down to Joshua watching from the beach. Another director might be focused on the action in front of them, and in post they’ll ask if we can extend the frame and create a digital move. The majority of directors don’t think about those things in advance. So when I’m observing a shot like that where Gareth is tilting the camera, I’ll wait for the cut and ask him, ‘During that tilt, what are you seeing?’ Then I’ll make notes.”

After crisscrossing Thailand, often covering multiple locations in a single day, cast and crew traveled to Pinewood Studios in the United Kingdom, where ILM had constructed a StageCraft volume as part of its virtual production toolkit. There, two major sequences were captured for the end of the film, when Joshua and Alphie board NOMAD. “It takes a lot of work to do StageCraft correctly,” notes Cooper, who used the tool for the first time on this show (as was the case for Edwards). “You have to be very careful that it’s the right fit. If I think about our goal as a movie, which was to always find real locations, there were only a couple of places where there was no equivalent location, and that is space. It made a lot of sense to use StageCraft for the NOMAD’s Biosphere environment and the Air Lock, where either the scope is so large that it would be cost-prohibitive to build a physical set, or the aesthetic goals would push you into doing a full bluescreen shot.”

A few smaller scenes were shot on an adjacent Pinewood stage equipped for traditional bluescreen or greenscreen, but as Roberts points out, the crew took the chance to innovate some distinct techniques. “We had a scale portion of the missile that Joshua climbs on,” he explains. “We created interactive lighting for that by taking portions of the real-time NOMAD model from Gareth’s virtual production scouts, and animated them to enable these mechanisms pushing missiles into place. I had this little animated sequence, which I then rendered out as a black-and-white texture that had different layers of structure moving past, which imagined that the sun was out in space and these things were casting shadows. We connected that from my laptop to a 12K projector that was mounted on the set. So when John David is hanging on the exterior of the missile, we have real light interacting with him in the close-ups. That evolved quite organically.”

Altogether, 2022’s principal photography lasted some 80 days, not including an additional round of element shoots and pick-ups led by Edwards with an even smaller crew across multiple Asian countries.

A Global Collaboration

ILM’s studios in San Francisco, London, and Sydney would each make significant contributions to The Creator, with additional support from the Vancouver studio and an array of vendors. In October of 2022, Edwards came to ILM San Francisco to screen a three-hour cut of the film. “Everyone came out recognizing that it was something different and special,” recalls London-based visual effects supervisor Charmaine Chan. “It was a lot more than we thought it was going to be. Originally, we estimated around 700 or 800 shots. Watching that cut, we knew there were more than twice as many. So the question was how to handle that and deliver on time, on budget, and at the quality we always want at ILM. We had to set guidelines with Gareth about how we’ll be able to get this film across the finish line, and he was very receptive to it.”

Cooper’s proposal was an unusual “three-strike system,” where Edwards would be given three opportunities across the life of a given shot to provide notes, allowing ILM to iterate with as much focus as possible on the key elements of that shot. “That’s the optimal structure to ensure that all the money goes into getting a clear direction for the shot,” Cooper notes. “We were probably only successful doing that about 70% of the time, but there were a healthy number of shots where, after we solved the questions about the simulants, for example, Gareth knew that if we kept to those standards, we wouldn’t be chasing really small details.”

The design evolution for the simulant head mechanics resulted in an elegant approach that felt almost human. Building on techniques first employed by ILM for The Irishman, the team was able to seamlessly blend the movements of the actor’s skin with the rigidity of the rear components. “We’re trying to empathize with these simulants and understand what they’re going through,” explains Chan. “When you first see one, it’s just another human being, then they turn to profile and you realize it’s something else. Because the performances are so good, whether it be Madeleine or Ken Watanabe [Harun], you’re focused on them and feeling their emotions and you forget about all that gear.”

Many subtleties were incorporated into the headgear to complement the performances, including character-specific details, such as the battle-worn tech of Harun’s components. The animation team were responsible for creating tiers of almost subliminal movements that reflected each simulant’s emotional state. “When Alphie stops the bomb robot, for example, that’s full pelt as the mechanics whir up, which includes wonderful sound effects,” explains Ian Comley, also a London-based visual effects supervisor. “For everything else, it’s a kind of Swiss watch, cogs and gears ticking, something always active, but in a more gentle way.”

Throughout post, the ILM crew enjoyed an extraordinary level of direct access to the director and production designer. “It can be pretty rare to feel like you’re a core member of the filmmaking team,” says Comley. “I can’t think of another film where the production designer has stayed on until the very last shot. To build every robot, simulant, vehicle, prop, and structure required a lot of design. Gareth knew what he wanted and had a great relationship with James, and we were a part of that. We took initial concepts from James, tried to riff off them, and fleshed them out into assets. We could then share back directly with James, who could go in and do paintovers. With direct access, there’s no diffusion of ideas. Instead, it’s collaborative filmmaking.”

To create the full-body A.I. characters as replacements for select live actors, ILM developed seven distinct robot designs following Edwards and Clyne’s visual methodology, which combined a 1980s technology aesthetic with organic, natural influences. Each design could be made unique with specific flairs, often informed by the individual character, such as with Amar Chadha-Patel’s performance as Satra.

“Amar is very expressive in his face,” explains Chan. “When he’s talking or thinking, his eyes and eyebrows say a lot. We captured that in the actor, but how do we present that in a robot? [Animation supervisor] Chris Potter was brilliant in suggesting all of these fine details, like in the eyes, which are very tiny on Satra. You can see a slight pupil and see eye darts when he’s thinking. The mouth was also slightly hinged, so all these little characteristics of Amar’s performance can come into this robot to show his emotions.”

Comley points out the “masterstroke” of Edwards’ decision to not decide on the robot characters while filming. “Even when it came to background characters, a typical film would decide who would be a robot and kit them up in mo-cap pajamas,” he explains. “None of that on this show. Gareth got naturalistic performances because people were just moving as people. If it was a scary scene, they acted scared with those fluid motions. No one had been told they were a robot and then acted twitchy or jittered, the kinds of things you might do.

“It also gave ILM license to switch out anyone,” Comley continues. “If he had picked someone on set to be the robot, it might turn out that that person isn’t located where your eye goes in the shot. The real person you want to be a robot is on the other side. We had the freedom to do that, which was a real challenge, but we could decide with Gareth after the fact which ones to choose. As shots changed, we could keep adjusting. The matchmove and paint teams did a fantastic job. The performances were so grounded, and we did very little to change that. The last thing Gareth wanted was for us to take a brilliant natural performance and turn it into a stereotypical robot. It was mostly heads and arms. There are instances with full-body robots, but by and large, they were additions instead of replacements.”

The London crew under Chan and Comley’s supervision spent considerable time on act three aboard the NOMAD, where the key challenge was to create fully-CG assets and environments that felt akin to Edwards’ naturalistic shots on real-world locations. On a typical show, ILM often incorporates grain or lens flares to match the source photography, but for The Creator, they also influenced creative decisions to help bridge the divide between Earth and space, including moving the NOMAD into lower orbit where more diverse colors and atmospheric elements could be incorporated. “It helped marry the story points where people on the ground are able to see NOMAD above,” as Comley notes.

Even in a traditional CG scenario, ILM found ways to empower Edwards’ freeform shooting style. “Besides the real-time rendering and LED walls, the StageCraft suite also includes virtual cam sessions,” explains Chan. “The whole exterior of NOMAD was pure CG, so Gareth was able to hold an iPad and look around to see the different sections of the ship and frame his shots, from the wings to the central section that we called the ‘bunny teeth.’ We saved so much time with Gareth being able to do that, rather than having us propose specific framing ideas. With Gareth being a visual effects artist, he just grabbed it and started making choices.”

At times, Edwards even embraced the most ordinary of methods to convey his vision. For the sequence when Joshua attempts to climb onto one of NOMAD’s towering missile silos, the director provided reference footage by “taking a wastepaper bin with a water bottle inside for the missile and a little LEGO figure taped on,” as Comley explains. “He shot it all with his iPhone. It had the same principles of photography that he’d applied in the v-cam. You have to feel like there is an operator discovering the events as they unfold. Gareth’s philosophy was often to think that the operator was hanging out of a fast-moving plane because the NOMAD is so big, that’s the only way you could do it.”

By the spring of 2023, ILM had completed some 1,700 shots for The Creator (a handful of which came from Edwards’ original test reel). “We made some good choices in terms of how to build this whole train set,” explains Cooper. “Maybe the most important one was that James Clyne had a concept team all through post-production. In visual effects, where it can get expensive is when you don’t know what you want, and you iterate multiple times and change directions. Normally there’s a bunch of concept art and you spend your time chasing that. We had existing concepts, but once the movie was shot, James kept reinterpreting it. When we’d land on an idea, we already knew the shot, the camera work, and we could deploy our resources accordingly. Sometimes it’s a 3D asset that we build because it’s going to be in 40 shots. Other times we take the art model from James’ team, put it into the shot, they paint on top of it, put it back in the shot once more, and it’s done. Not standard procedure at all. It’s all about looking for those opportunities.”

Looking for the Next Challenge

The Creator’s unconventional production methods were successful not only in terms of the efficiency of its budget and resources, but in the ability of the artists on every level to make genuine contributions to the story. That came from Edwards’ example and leadership. “Everyone wanted to be on this project to the point where someone would roll off the show and keep asking if they could do one more thing on a shot, just to make it a little better,” says Chan. “Sometimes you can feel like a cog in a machine, just pushing buttons, but this was the opposite. Everyone on every level felt that they could be creative and suggest ideas.”

ILM was established to create solutions that respect the integrity of a filmmaker’s original vision. For an artist like Comley, the willingness of the filmmaker to include ILM in that visionary process is much more important than the actual problems to be solved. “One way or another, we can paint out that thing, track that thing, come up with a creative solution,” he notes. “Throw us anything you have. I’d rather have that and the vision and richness of photography than a clinical greenscreen and a question mark.”

It was a refreshing experience for everyone, but one critically dependent on the filmmaker. “You have to be willing and able to take this gamble, and it’s hard to find both things together,” says Cooper. “There are a lot of filmmakers that are willing but because of the studio constraints around them, they’re not able. And there are others who have the money and space to do it, but don’t necessarily have the amount of knowledge required. So if you can clone Gareth, you’re in a great place! [laughs] I think there will be opportunities to work like this again. Filmmakers will come to us and say, ‘I know what my movie is, I have so many dollars, and we don’t have to hit everything that I want, but I want to hit as many as I can – can we work together?’ As a company, we’d respond well to that.”

—

Lucas O. Seastrom is a writer and historian at Lucasfilm.