ILM visual effects supervisor Vincent Papaix and Nerfstudio creator Matt Tancik discuss their innovative approach to visual effects shot design.

By Lucas O. Seastrom

At this year’s HPA (Hollywood Professional Association) Awards for Technology & Innovation, Industrial Light & Magic and collaborator Nerfstudio took home a win in the Innovation in VFX, Virtual Production & Animation category. Embracing a new kind of open source toolset allowed ILM to recreate visual effects shots for Marvel’s 2025 series Ironheart at a degree of efficiency that greatly outpaced established techniques. The key was “NeRF,” or neural radiance fields, a method that allows 3D photorealistic environments to be created from a sampling of real-world 2D photography.

A Catalyst from Marvel’s Ironheart

ILM visual effects supervisor Vincent Papaix faced an interesting challenge with a handful of drone-based shots from Ironheart, wherein the series’ namesake flies over Chicago’s lakeside waterfront and river district. The fast-flying CG character had to be integrated with the live action plates shot on location. “They decided to film with the drones in a very slow way, thinking we could retime the footage,” Papaix explains to ILM.com. “Typically, you might retime at 200% or 300%, but in this case it was over 1,000%. The character’s movement needed to be very, very fast. Traffic would have to be replaced. When you’re filming at normal speed with the drone, you don’t get the sense of the micro-movement, but at high speed, you could see the high-frequency movements of the camera.”

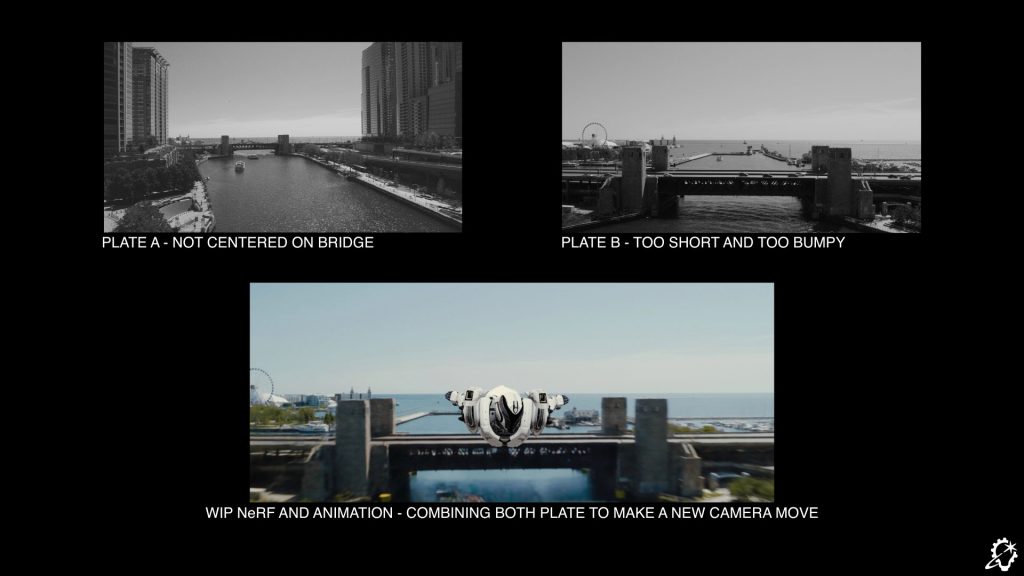

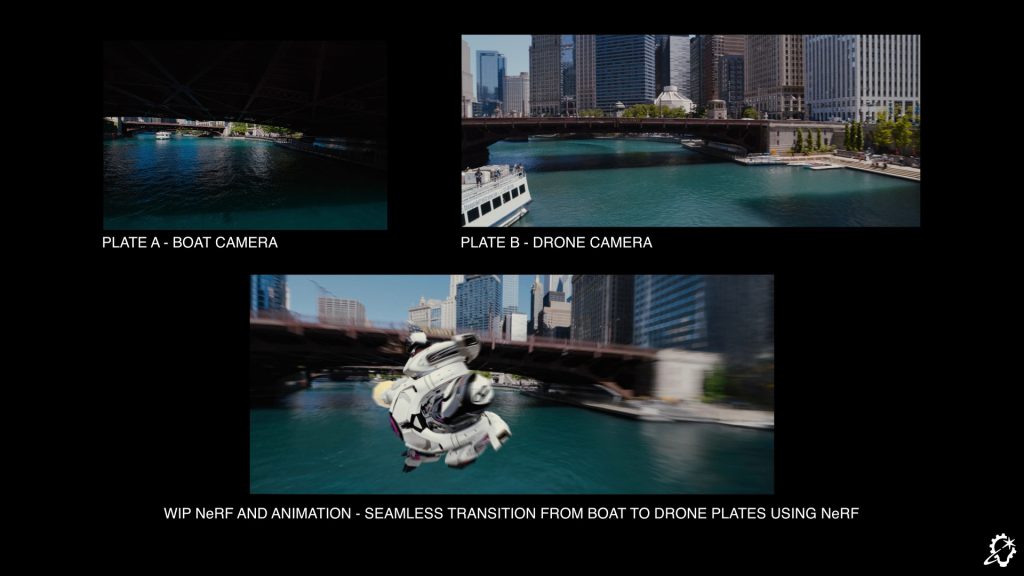

The visual effects team needed to recreate the desired camera moves while maintaining the appropriate view of the live action background plate. Normally, they might attempt a 2D stabilization of the image, but in a case like this, the sense of depth, or parallax, made for difficulties in trying to stabilize both the foreground and the background at the same time. They considered recreating the entire world in CG, traditionally modeling, texturing, and shading every detailed aspect of the Chicago setting. But with an episodic production schedule, the necessary resources and time required would be prohibitive.

Papaix decided to begin what he describes as a “pet project,” researching how NeRF models could be applied to visual effects work. At first there was no guarantee that his inquiries would yield results, but then he discovered Nerfstudio, an open source program that provided an end-to-end workflow for developing 3D environments from 2D photography.

Nerfstudio creator Matt Tancik began his research in developing neural radiance fields as a PhD student at the University of California, Berkeley. “People wanted to experiment and see how much they could push this technology,” Tancik says. “It became obvious that there was a desire for this research to make it into the industry field. But there wasn’t an easy way to do it because it was kind of obtuse research code at the time. The Nerfstudio project was about trying to see how we could wrap it up into something that looked more like a product, and fully open source, so that other people could start playing with it.

“And most notably,” Tancik adds, “people could help build upon it. A lot of the research projects that we saw coming out of NeRF acted like modules attached to NeRF to make it better along one axis or another. It made sense to try to collaborate as much as possible. The Nerfstudio project was a step towards doing that, and that’s when Vincent and ILM started playing around with it.”

The Function of “NeRFs”

But how exactly do neural radiance fields help empower artists like Papaix and his colleagues to work more efficiently? As Tancik explains, it’s a process that seeks to forgo the traditional CG methods that involve the complex, often laborious craft of representing photorealistic imagery as meshes and triangles with applied textures and lighting. “All of that takes a significant amount of effort to make it photoreal, and in some cases, it’s almost impossible,” says Tancik. “That’s not for the lack of people trying to make these methods easier and easier. The goal of NeRF was to essentially see if we could use machine learning to accomplish the same thing. Instead of manually placing these triangles, can we have an algorithm construct these things from photos? So then the work becomes capturing many photos of a scene and converting them into a 3D representation.”

The result is a new approach to storing the corresponding data. Instead of triangles mapped within the CG model, NeRF uses individual points in space, each assigned a specific color. “When you look out into space, you’re shooting out into the scene and seeing what points you hit, and you’re noting which direction you’re hitting that point of space,” Tancik notes. “A single point in space, whether I’m looking at it one way or another, might look a little different. By describing the scene like this, it fits really nicely into optimization techniques that we can use to fit that to an image.”
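For readers who want to see the idea in concrete terms, the following is a minimal sketch of how sampled points along a single line of sight can be blended into one pixel color. It follows the standard volume rendering formulation commonly associated with NeRF, not ILM’s or Nerfstudio’s actual code. The function name `composite_ray` and the sample layout are assumptions made for illustration, and each sample’s color is assumed to have already been evaluated for the viewing direction.

```python
import math

def composite_ray(samples):
    """Blend samples along one camera ray into a single RGB pixel.

    `samples` is a hypothetical list of (density, (r, g, b), step_length)
    tuples ordered from nearest to farthest from the camera.
    """
    pixel = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked along the ray
    for density, color, step in samples:
        # Opacity of this sample: denser or longer segments block more light.
        alpha = 1.0 - math.exp(-density * step)
        weight = transmittance * alpha
        for i in range(3):
            pixel[i] += weight * color[i]
        # Whatever this sample absorbed is no longer visible behind it.
        transmittance *= 1.0 - alpha
    return tuple(pixel)

# Empty space, then a nearly opaque green surface, then a blue point behind it.
ray = [
    (0.0, (1.0, 0.0, 0.0), 1.0),
    (50.0, (0.0, 1.0, 0.0), 1.0),
    (5.0, (0.0, 0.0, 1.0), 1.0),
]
pixel = composite_ray(ray)
```

In this example the green surface hides the blue point behind it, so the pixel comes out green. Because every step is a smooth function of density and color, the result can be compared against a real photograph and adjusted through optimization, which is the fit Tancik describes.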

ILM’s Practical Application

Working with former ILM research engineer Sirak Ghebremusse and former ILM pipeline technical director Kevin Rakes, Papaix oversaw the effort to adapt Nerfstudio’s functionality for visual effects. Both a new encoder and decoder were required to help translate information between Nerfstudio and ILM’s other tools, which ensured the team’s ability to maintain a certain amount of precision with color and image range.

Similarly, the team needed the reconstructed environments to match real-world scale, measured in feet, so Tancik himself created a new file format to aid the transition. That also required the development of new “gizmos” – a group of various nodes of information – within the compositing software Nuke, which allowed the artists to move seamlessly back and forth between the Nerfstudio render and the final effects work.

“We can work with standard layout and animation in feet, then go into NeRF, import any camera we want, render it through Nerfstudio, and bring that camera move back with us into the Maya or Zeno file,” Papaix notes. “It was key to have that ability.”

As the process evolved, ILM was able to apply these new capabilities in multiple ways. They could stitch a seamless transition between two separate camera views over the water, one captured by a drone and another from a boat, all without the need to create a new CG environment. Entire objects, such as street traffic on a bridge, could be removed. And because they were able to maintain parity between their visual effects environment and the Nerfstudio-rendered space, they could develop entirely new camera paths at the request of the filmmakers.

“We could create a new smooth camera move, basically art direct the exact move that we wanted, and then show that to the director,” Papaix says. “They were very happy. They didn’t think it was possible to change a camera move using an original plate, but we did. They said it was like magic to them. People were curious. Did we project the plate onto geometry? Did we model the whole city? No, there’s no modeling or anything.”

Now with Greater Accessibility

Papaix is keen to note that at the time ILM collaborated with Nerfstudio – in 2022 and ’23 – these methods were still considered experimental. “Very few people were putting this kind of stuff into production. There was a lot of research taking place, but Matt showed how this could be useful, and ILM took it and made it production-ready.”

Tancik himself adds that “I’ve always been interested in the visual side of things, and hoped to get to that point, but didn’t know if the concept would ever actually make it there. It was not an easy thing to run. You needed a lot of computing power and GPUs. It didn’t feel like it was there yet to be useful in industry or productions. So watching Vincent and ILM put it into practice was really fun.”

Today, the use of neural radiance fields, along with a related successor technique known as Gaussian splatting, continues to rise as computing efficiency improves. “This was a science paper a few years ago, and now it’s making its way into all of the software that we use,” Papaix says.

“With the move to Gaussian splat, if I had to do those shots today, I could probably do it from start to finish in only a few days, compared to the months that it took before,” Papaix concludes. “At the time, it took about six months because it was more of a research project off and on, a side project. Now that we understand the tech, we can optimize things, and we can do things much faster. The tech improves so fast. We’re still in the early days of learning how these techniques will be applied.”

See the full list of winners from the HPA Awards for Innovation & Technology.

—

Lucas O. Seastrom is the editor of ILM.com and Skysound.com, as well as a contributing writer and historian for Lucasfilm.