San Francisco News

Unearthing the Past: How ILM Brought ‘The Dinosaurs’ to Life

Artists from ILM’s London studio, including visual effects supervisor Steve Moncur, animation supervisor Stafford Lawrence, and CG supervisors Christian Waite and Elizabeth Mitchell, discuss their team’s work on the Netflix series.

Redefining the Grid – ILM’s Vancouver Team Talks ‘Tron: Ares’

Visual effects supervisors Vincent Papaix and Abishek Nair join animation supervisor Mike Beaulieu to discuss bringing the Grid to the streets of their home city, Vancouver.

Inside the ILM Art Department: ‘Stranger Things’ Season 5

Artists from the London and Sydney studios discuss some of their favorite work from the Netflix production.

ILM Journeys Into the Upside Down: The Visual Effects of ‘Stranger Things’ Season 5

Visual effects supervisor Bill Georgiou and associate visual effects supervisors Stephen Tong, George Kuruvilla, and Arnab Sanyal discuss ILM’s visual effects contributions to the final season of Netflix’s hit series.

Fin Argus Suits Up as Volo Bolus in ‘Star Wars: Beyond Victory’

The actor who voiced Volo Bolus and supplied the character’s motion-capture performance discusses their collaboration with ILM on the mixed reality playset.

Kill, Kill, Kill: Bringing the Rampaging Boar to Life in Sam Raimi’s ‘Send Help’

Industrial Light & Magic artists Marc Whitelaw and Maia Kayser discuss the studio’s work on Sam Raimi’s ‘Send Help’ and how its rampaging boar sequence evolved into something bloodier and funnier.

ILM Wins BAFTA TV Craft Award for ‘Andor’

The team joins their Lucasfilm counterparts in the win for Special, Visual and Graphic Effects.

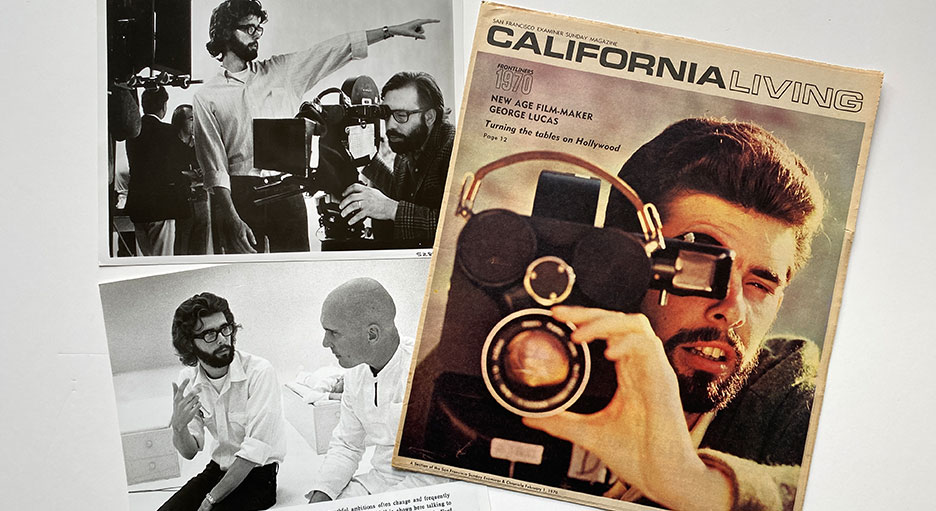

Honoring Lucasfilm’s 55th Anniversary

In the spring of 1971, George Lucas quietly established a new company to carry his future projects.